Running Immich on Limited Hardware: My Raspberry Pi Setup

Published February 24, 2026 · 6 min read

I had wanted to use Immich for a long time, but until recently my homelab was powered by a Raspberry Pi 3B, which was already struggling with the 15 services I was running on it. I already store all my photos on my NAS, but finding specific memories there is very difficult. Now that I have moved from Docker on a Raspberry Pi to an 8GB Raspberry Pi 5 Kubernetes cluster, I do have some capacity left, although not much.

Setup

I deployed the provided Helm chart into its own namespace, created a new shared drive on my NAS and mounted it to the pod. I gave it a domain name and made sure it pointed to my internal ingress. If I want to upload photos when I am away, I'll use my VPN.

To get the most out of the features, I also enabled the machine learning container. I added Authelia SSO login and started testing. It worked beautifully with the couple of pictures I had uploaded.

Then I removed the entire namespace to clean up all the test data and settings, and started fresh for the real deal: all family pictures (around 800GB).

Importing pictures

There are a couple of ways to do this. The easiest is to keep all the data where it is and mount it as an external library. But since I wanted all new photos to be uploaded to Immich directly, I might as well remove the legacy upload directory at some point and have everything consistent. So I created an API key just for uploading and started with a semi-small directory using the Immich CLI.

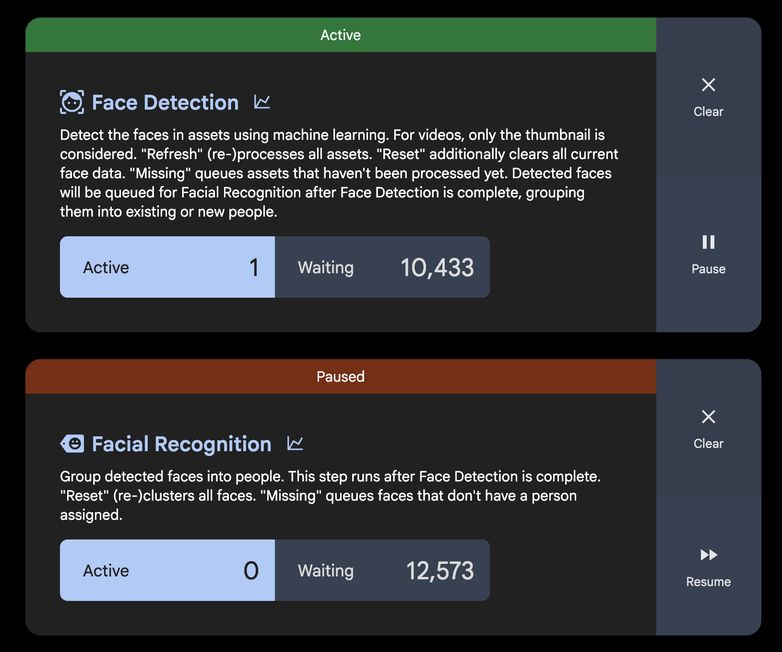

Immediately I saw trouble: Immich was not responding, some other services went down, and it was difficult to even get logs out of my host. I restarted the cluster and paused all the queues, just to give myself some time. The most important step was setting sensible resource limits on the services. But knowing that Immich needs more resources to process all queues in parallel, I also kept just a couple of queues running while uploading the directory, and manually adjusted which queue was running to keep Immich responsive.

Since this was only a small folder, I knew I had to do something before uploading the rest. At this point a couple of things stood out:

- Most queues are "optional", like face detection, smart search, duplicate detection, OCR and video processing. They improve search or video delivery, but they are nice-to-haves.

- Some queues are required when using the web interface. Without thumbnail generation you won't be able to view the photos in the timeline, and without metadata extraction pictures won't even show up.

- The default queue concurrency is a reasonable starting point, but it is too high for a low-powered machine with many other services running on it.

- I will have to upload my collection in smaller chunks (like 50GB at a time)

- There is an API to control the queues, and API keys can be limited to queue-specific permissions
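The chunking idea from the list above can be planned ahead of time. Here is a minimal sketch in Python, assuming the collection is split into top-level folders whose sizes are already known. The function name `plan_batches`, the folder names and the sizes are made up for illustration; this is not part of Immich or its CLI.

```python
from typing import Dict, List

GB = 1000 ** 3  # decimal gigabyte, matching the "50GB at a time" rule of thumb


def plan_batches(folder_sizes: Dict[str, int],
                 max_batch_bytes: int = 50 * GB) -> List[List[str]]:
    """Group folders into upload batches of at most max_batch_bytes.

    Folders are taken in sorted order. A folder that is larger than the
    limit on its own still gets uploaded, in a batch by itself.
    """
    batches: List[List[str]] = []
    current: List[str] = []
    current_size = 0
    for name in sorted(folder_sizes):
        size = folder_sizes[name]
        # Close the current batch when adding this folder would exceed the limit.
        if current and current_size + size > max_batch_bytes:
            batches.append(current)
            current, current_size = [], 0
        current.append(name)
        current_size += size
    if current:
        batches.append(current)
    return batches


# Hypothetical folder sizes, purely for illustration.
example = {"2019": 30 * GB, "2020": 25 * GB, "2021": 10 * GB, "2022": 60 * GB}
print(plan_batches(example))
```

Each inner list is then one upload run, with time in between for the queues to drain.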

I did what everyone would do: automate it.

With n8n, I created a workflow that checks the queues every 15 minutes. On every run it fetches the statistics for all queues. When there are pictures waiting in the "essential" queues, it runs only those queues and pauses the others. Otherwise, it keeps metadata extraction enabled, pauses the thumbnail queue and processes a single optional queue. When the optional queues are empty as well, it falls back to running just the essential queues.
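The decision rule of that workflow can be written down as a small pure function. This is a sketch in Python rather than the actual n8n workflow. The queue names and the shape of the `pending` dictionary are assumptions for illustration, and the calls that actually pause or resume queues through the Immich API are left out on purpose.

```python
from typing import Dict, Set

# Assumed queue names; check them against your own Immich version.
ESSENTIAL = ("metadataExtraction", "thumbnailGeneration")
OPTIONAL = ("smartSearch", "faceDetection", "duplicateDetection",
            "ocr", "videoConversion")


def queues_to_run(pending: Dict[str, int]) -> Set[str]:
    """Return the queues that should run; every other queue gets paused.

    `pending` maps a queue name to the number of waiting jobs.
    """
    # Work waiting in an essential queue: run only the essential queues.
    if any(pending.get(q, 0) > 0 for q in ESSENTIAL):
        return set(ESSENTIAL)
    # Otherwise keep metadata extraction on and work through exactly one
    # optional queue, in the priority order given by OPTIONAL.
    for q in OPTIONAL:
        if pending.get(q, 0) > 0:
            return {"metadataExtraction", q}
    # Nothing left anywhere: fall back to the essential queues.
    return set(ESSENTIAL)


print(queues_to_run({"thumbnailGeneration": 120, "smartSearch": 900}))
print(queues_to_run({"smartSearch": 900, "ocr": 40}))
```

Keeping the rule separate from the API calls makes it easy to change the priority of the optional queues without touching the rest of the workflow.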

Processing takes quite long this way, and thumbnail generation can be delayed by up to 15 minutes. But as I upload one batch per day, the queues empty out every night.

What I love about queues in software

Apart from the fact that I can watch the queues go down slowly, they give a ton of flexibility in scaling and resource planning. With a bigger installation you could raise the concurrency, or even scale the workers separately. A queue decouples the user action from the resource-intensive processing it triggers. In cloud-native solutions this prevents sudden bursts of requests from taking down an application, and it lets a low-powered device handle the work at its own pace while still reaching its goals.
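To make the decoupling concrete, here is a generic sketch using only the Python standard library. It has nothing to do with how Immich implements its queues; `process_with_limited_workers` and its parameters are illustrative names. Items are queued right away, and a fixed number of workers chews through them at whatever pace the hardware allows.

```python
import queue
import threading

_STOP = object()  # sentinel that tells a worker to exit


def process_with_limited_workers(items, handler, concurrency=1):
    """Queue all items immediately, then process them with a fixed
    number of worker threads. Returns the handler results."""
    jobs = queue.Queue()
    results = []
    lock = threading.Lock()

    def worker():
        while True:
            item = jobs.get()
            if item is _STOP:
                break
            out = handler(item)
            with lock:  # guard the shared result list
                results.append(out)

    threads = [threading.Thread(target=worker) for _ in range(concurrency)]
    for t in threads:
        t.start()
    # The "user action": enqueueing is cheap and never waits for processing.
    for item in items:
        jobs.put(item)
    # One stop marker per worker, queued behind the real work.
    for _ in threads:
        jobs.put(_STOP)
    for t in threads:
        t.join()
    return results


# With one worker the items are handled strictly in order.
print(process_with_limited_workers(range(5), lambda x: x * 2, concurrency=1))
```

Raising `concurrency` is the only change needed on stronger hardware, which is the scaling flexibility described above.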

I will keep this setup for the time being, as it's probably very handy when we return from events away from home where we take lots of pictures (it only uploads on VPN or at home).

Try it yourself!

I've really enjoyed looking into Immich and its amazing feature set. I had already moved all my photos away from the privacy-unfriendly US companies, but I missed the memories, easy scrolling and advanced search that really make big libraries useful.

You won't need much: I am running it on a NAS and a Raspberry Pi. TheLocalStack runs it on a broken laptop and writes about privacy and why keeping data away from big tech is so important.

Just try it, and always create a backup (especially when staying with big tech).

FAQ

Aren't you afraid that the SD card will corrupt?

In all my years of working with Raspberry Pis (since version 1), I have never had an SD card go bad. Some were running in memory only, while others were storing data, for example as my media server. But in the current setup I have an NVMe drive with backups to an offsite location. I did an unplanned full restore at some point, so I know that works.

How long did it take to process 800GB of photos?

I'm doing 14 batches, usually with a day between batches to let the queues process. So the full import takes about two weeks, but it all runs in the background while I can still use Immich normally.

What are your resource limits set to?

For the Immich server container:

    limits:
      memory: 2Gi
      cpu: 1000m
    requests:
      memory: 1Gi
      cpu: 500m

For the machine learning container:

    limits:
      memory: 1Gi
      cpu: 1000m
    requests:
      memory: 512Mi
      cpu: 250m

Why not just use the external library feature?

I want to keep all my data in the same place, and I wasn't sure how the sharing features would work with external libraries. Importing everything directly gives me confidence that all features will work as intended.

Does the machine learning work well on a Raspberry Pi 5?

It's good enough; it actually surprised me a bit. I used smaller models than the default, but they were very good. Face detection and smart search work well, just slower than on more powerful hardware.

What happens if the upload fails halfway through?

I can just re-run the upload script and it will filter out duplicates, even when uploaded within a different batch. The Immich CLI handles this gracefully.

Can other devices/family members upload while queues are running?

Normally it will just handle it, because running a single queue tops out at around 80% CPU with memory to spare (about 1GB). But when uploading a batch I tend to disable the metadata queue during the first processing pass, just to speed up the queues.
